Four months ago I started using Claude Code for my personal work. But I'm not a developer and Claude Code is built for developers. I didn't want to use it to write code; I wanted to use it to help me think.

Those four months have been pretty torrid at times.

That's probably a strange thing to admit, but I want to be honest about this. Claude Code was designed by software engineers for software engineers (the hint is in the name, you might say). Many of its functions operate in ways designed for developers (e.g. setup step #1: link up your GitHub account… GitWhat?). And most of what's written about it assumes you're writing software. The internet is full of articles by people describing how they vibe coded some spectacular new thing in two days.

But my background is in banking, customer experience and digital innovation. Now I'm running a startup. I couldn't write a line of code if my life depended on it. I wanted to figure out how to use Claude Code to improve my output across the work of creating and running a business: research, drafting, analysis, decision-making.

Getting started was daunting. Material was easy to find, but a lot of it felt impenetrable, or generic, or written for someone further along than me. I couldn't tell which of the various 'playbooks' were right for me, if any. I didn't know where to begin. So I did what any self-respecting person would do in similar circumstances: I jettisoned the instructions and just started to use it and make mistakes. What could possibly go wrong?

Yes, that was maybe reckless. And quite possibly foolish. But (several disasters notwithstanding) I think it turned out to be the most useful thing I did.

Because the first genuine realisation, about four or five days in (after I lost a day's work), was that I could ask Claude about the problems I was having with it. Not in the abstract, but in specific terms. That just happened… why? How do we stop that happening again? What would a better approach look like next time? Of course, Claude doesn't understand what went wrong any more than a search engine understands your query. But the responses were specific enough and good enough to make me stop and think properly about what had happened.

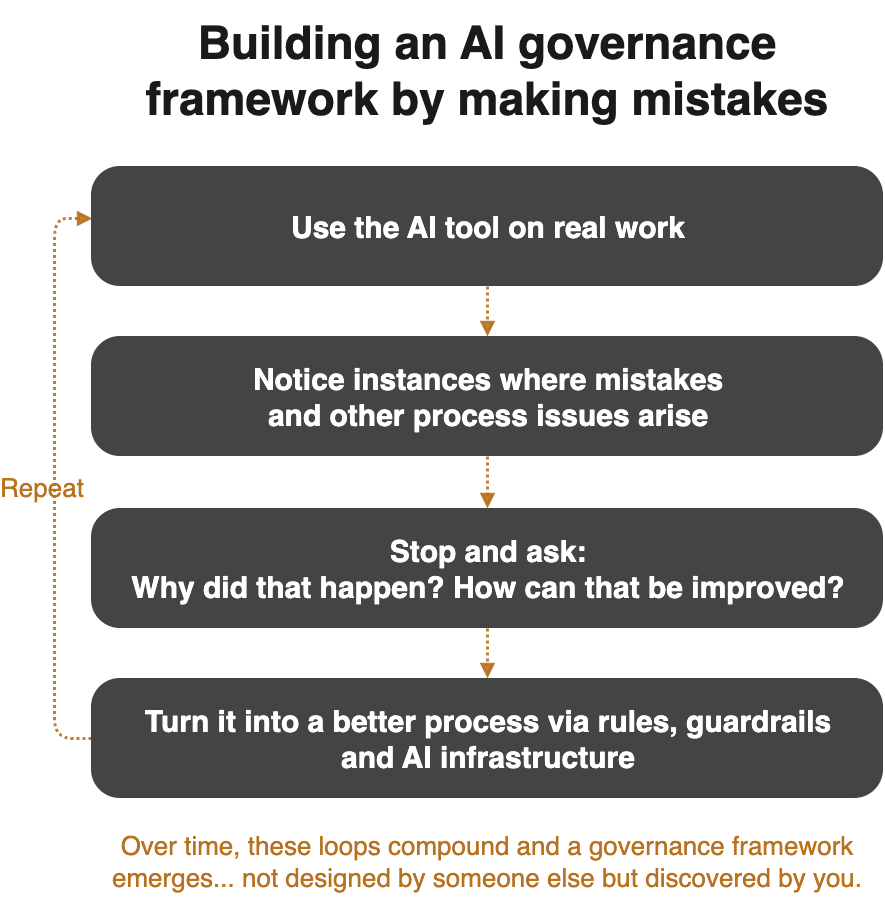

And that realisation started a loop I didn't expect… I'd try something; notice a mistake or an issue; pause what I was doing; ask Claude what happened and why; have a (sometimes long and winding) interaction; decide what to do about it; and then try and fix or improve it. Sometimes that became a rule. Sometimes it was just a mental note that shaped how I worked the next time.

Over the months, those loops compounded. The result is a working framework that sits in a set of rules files, most of which I helped craft, word by word. Rules like: don't act on plausible answers; show your evidence before making a recommendation; explain what you're about to do before you do it, and wait for me to confirm; checkpoint your working state every hour so we don't lose context; or my favourite: don't engage in performative agreement bordering on sycophancy. There's a bunch of other infrastructure that I didn't know I needed until I did. Whether those rules are actually good is a fair question. But I can say that in three and a half months of intensive daily use, I haven't lost data, published something unintended or made a decision I had to reverse because the AI led me astray.

None of that was designed up front. I didn't sit down and copy a governance framework. It emerged, one incident at a time, from me talking to Claude… not about the job at hand, but about the process of working with Claude on the job at hand.

I've come to think this is the underrated thing about working with AI as a non-technical person. You don't have to know the right 'playbook'. You just have to be willing to give it a go, use the tool and then (here's the key) notice what's going wrong and then notice your noticing.

All that noticing turns into rules and other things that overcome the problems. And you don't have to write all the rules; the AI can help you. It can help you apply them. It can even remind you to follow them.

Which means the path to using AI is available to anyone willing to start, make mistakes and build up their own standards by paying attention. Don't take it from me; just ask the people I've been talking to about how to apply all this in non-technical domains like accountancy, history, data analysis, counselling and report writing. The transformative realisation here is that this isn't a tool just for developers; it's a tool for anyone engaged in intellectual content generation.

So this is the start of a series of articles about what I'm learning working this way. Things like: what happens when you take a tool designed for developers and use it to run a business, without being a developer? What have I had to invent because nobody else had written it down for people like me? What could possibly go wrong?

But there's a deeper question behind all of this. It's not just that AI tools can make people more efficient or equip us all to be vibe coders. It's that working with AI tools requires us to reconsider how we work; how we reason; how we develop ideas. It raises questions about the nature of knowledge and has implications for the process of critical reasoning. That's something I want to explore properly, and it's what this series is really about.

If that's interesting, please feel free to get in touch.